In brief

- Google's new Nano Banana 2 model offers Pro-level image generation now running at Flash speed

- The model's real-time web search gives AI images factual grounding

- Seedream 5, a Chinese model launched a few days before this announcement, offers more flexibility and could be an interesting competitor.

Google has been releasing AI software at a staggering pace lately. In the last week or so alone, we've seen Gemini 3.1, Lyria, and Pali, which came with a photo shoot feature that turned out to be a genuine crowd pleaser. And now, the follow-up to arguably the biggest image generation hit of last year has arrived.

Nano Banana 2, launched Thursday, “brings the high-speed intelligence of Gemini Flash to visual generation, making rapid edits and iteration possible,” Google said in an official blog post, adding that “it makes once-exclusive Pro features accessible to a wider audience.”

Here's the quick breakdown. The original Nano Banana was actually named Gemini 2.5 Flash Image, and was basically that: An image generator based on Gemini 2.5 Flash. Then Nano Banana Pro came along, which was Gemini 3 Pro Image, and it became the gold standard for AI image editing when it launched last November.

Introducing Nano Banana 2: Our best image generation and editing model yet. 🍌

Pro-level quality, at Flash speed. Rolling out today across @GeminiApp, Search, and our developer and creativity tools. pic.twitter.com/6oNWYhVSqp

— Google (@Google) February 26, 2026

Nano Banana 2 is technically Gemini 3.1 Flash Image—so it's not a direct sequel to Pro, but more like a significantly upgraded version of the original, now running on the newer Gemini 3 Flash backbone. Confusing? Yes.

The pitch here is simple: take everything that made Nano Banana Pro special, and make it run at Flash speed.

The new Nano Banana 2 is rolling out today across Google's ecosystem. In the Gemini app, it replaces Nano Banana Pro as the default across Fast, Thinking, and Pro models. Google AI Pro and Ultra subscribers can still access Nano Banana Pro for specialized tasks by regenerating via the three-dot menu.

It's also live in Google Search's AI Mode and Lens, available via the Gemini API in AI Studio and on Vertex AI in preview, and it's the new default image generation model in Flow at zero credits for all users. Google is also expanding SynthID watermarking and adding C2PA Content Credentials support to give platforms better tools for identifying AI-generated media. The SynthID verification feature has already been used over 20 million times since November.

What's new in Nano Banana 2

The biggest headline is world knowledge. Nano Banana 2 can pull from real-time web search during image generation, which means it can render specific subjects with accuracy. Logos, landmarks, recent events, brand identities—it knows what things look like because it can look them up, not just guess from training data.

Text rendering got a serious upgrade too. You can now generate accurate, legible text inside images, whether you're spelling it out in the prompt or letting the model decide what to write based on context. It also handles in-image translation, so you can localize an ad campaign across multiple languages without rebuilding the visual from scratch.

Subject consistency is pushing into new territory too. According to Google, the model can maintain character resemblance across up to five subjects and keep the visual fidelity of up to 14 objects in a single workflow. That's a big deal for anyone building narratives, storyboards, or consistent brand assets.

On the production side, you get everything from 512px all the way up to 4K, with native support for a wide range of aspect ratios. Instruction following is also tighter than in previous Flash models, which in practice means fewer prompts that sort of do what you asked, and more prompts that actually do exactly what you asked.

The reasoning is also now configurable. Developers can set thinking levels from Minimal (the default) all the way to High or Dynamic, letting the model reason through complex prompts before committing to a render. That combination of speed and optional deliberation is where a lot of the quality gains are coming from.
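For developers, the thinking-level setting might be modeled along these lines. This is a minimal sketch, not Google's API: the `ThinkingLevel` enum, the `ImageRequest` class, the `build_payload` helper, and the field names are all hypothetical. Only the level names (Minimal, High, Dynamic) and the Minimal default come from Google's description, and any intermediate levels are left out.

```python
from dataclasses import dataclass, asdict
from enum import Enum


class ThinkingLevel(str, Enum):
    # Level names come from the article; the string values are assumed.
    MINIMAL = "minimal"
    HIGH = "high"
    DYNAMIC = "dynamic"


@dataclass
class ImageRequest:
    # Hypothetical request shape, for illustration only.
    prompt: str
    thinking_level: ThinkingLevel = ThinkingLevel.MINIMAL  # Minimal is the stated default


def build_payload(request: ImageRequest) -> dict:
    """Return a plain dict that a client could serialize to JSON."""
    payload = asdict(request)
    payload["thinking_level"] = request.thinking_level.value
    return payload


# A quick edit keeps the default; a complex prompt opts into more reasoning.
fast = build_payload(ImageRequest("swap the background for a beach"))
careful = build_payload(
    ImageRequest("timeline of Bitcoin's history, kids drawing style", ThinkingLevel.HIGH)
)
```

The point of the design is that deliberation is opt-in: quick edits stay on the fast default, and only prompts that need research or layout planning pay for extra reasoning time.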

Testing the model

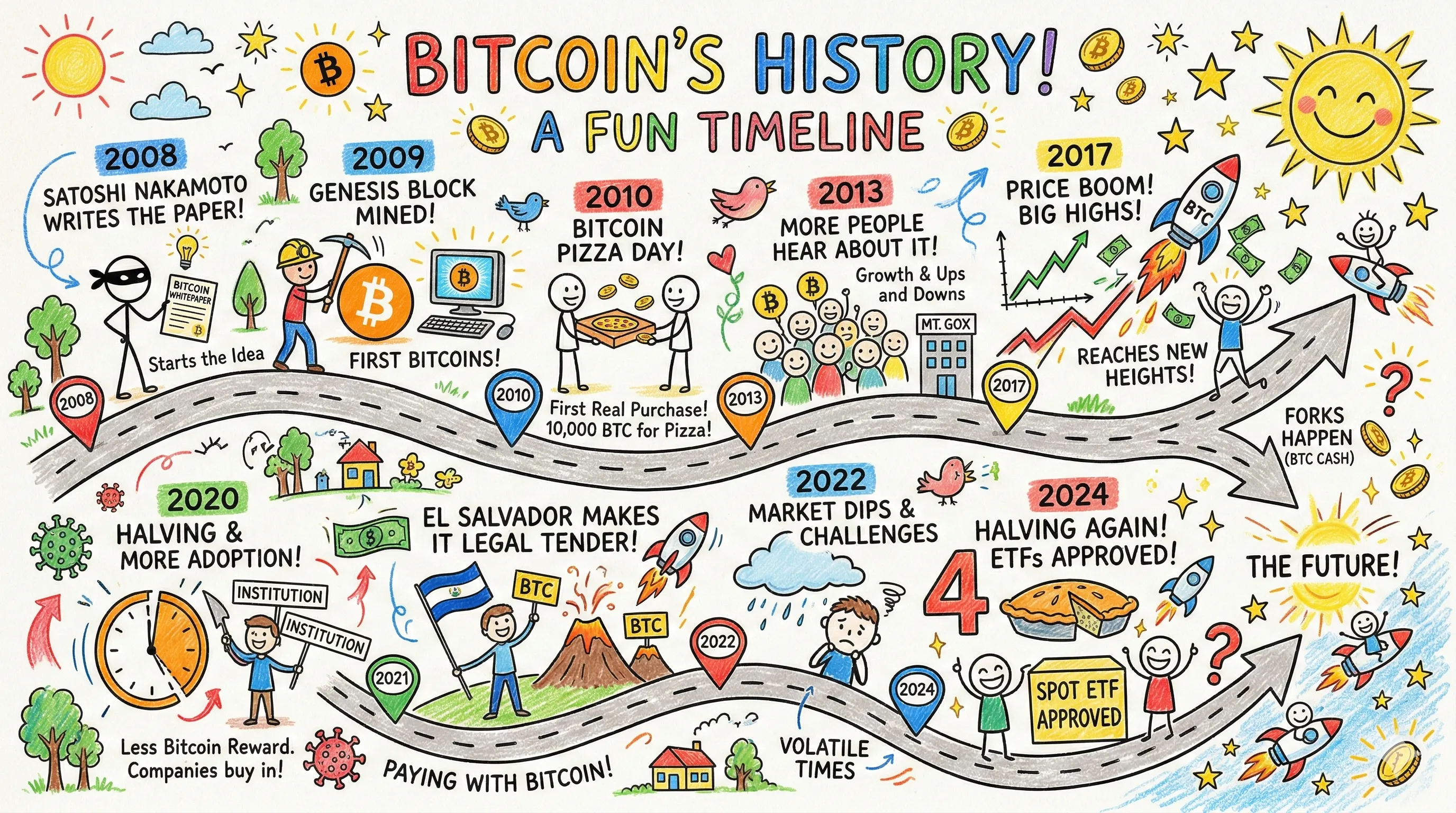

The speed claims are real. We asked Nano Banana 2 to generate a complete Bitcoin ecosystem timeline, including research and final artwork. The full process took roughly the same amount of time Nano Banana Pro needed just to complete the Bitcoin timeline alone. When we followed that up with an Ethereum timeline prompt, it barely registered as additional time. That is a meaningful gap for anyone running iterative pipelines or building at scale.

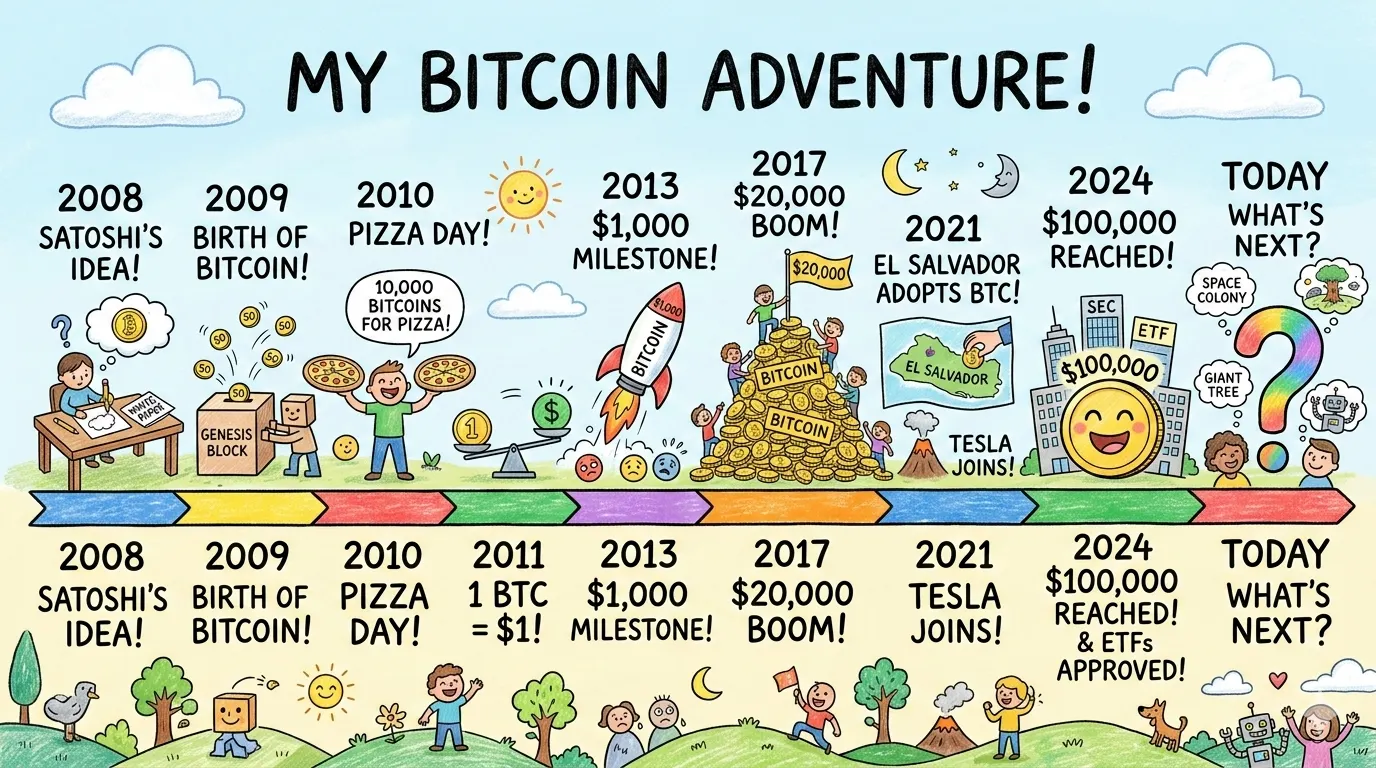

The world knowledge capability genuinely changes how the output feels. When we prompted for a historical crypto timeline, the model searched multiple sources, selected the most relevant events, and structured the art around them. It wasn't generic. The model made editorial decisions. The only real flaw we spotted was a missing visual link between the end of one section and the start of another. Everything else holds together. Nano Banana Pro, by comparison, produced something more generically artistic and made no apparent effort to source or prioritize events.

For example, this is what Nano Banana 2 generated when prompted “Create a timeline of Bitcoin’s history, highlighting the most important events from its creation to today. widescreen, kids drawing style” using thinking.

For contrast, this is the same generation using Nano Banana Pro:

Character consistency and text handling were the most impressive parts of our test results. We asked the model to generate a magazine front cover, and every line of text came out accurate and well defined. No garbled characters, no drifting typography.

Nano Banana Pro is also strong here, but it produces more hiccups, and its magazine cover output had a 3D render quality to it that comes across as synthetic.

Nano Banana 2's result looks photorealistic. It also shows fewer garbled characters overall when generating text by its own reasoning, not just when explicitly told what to write.

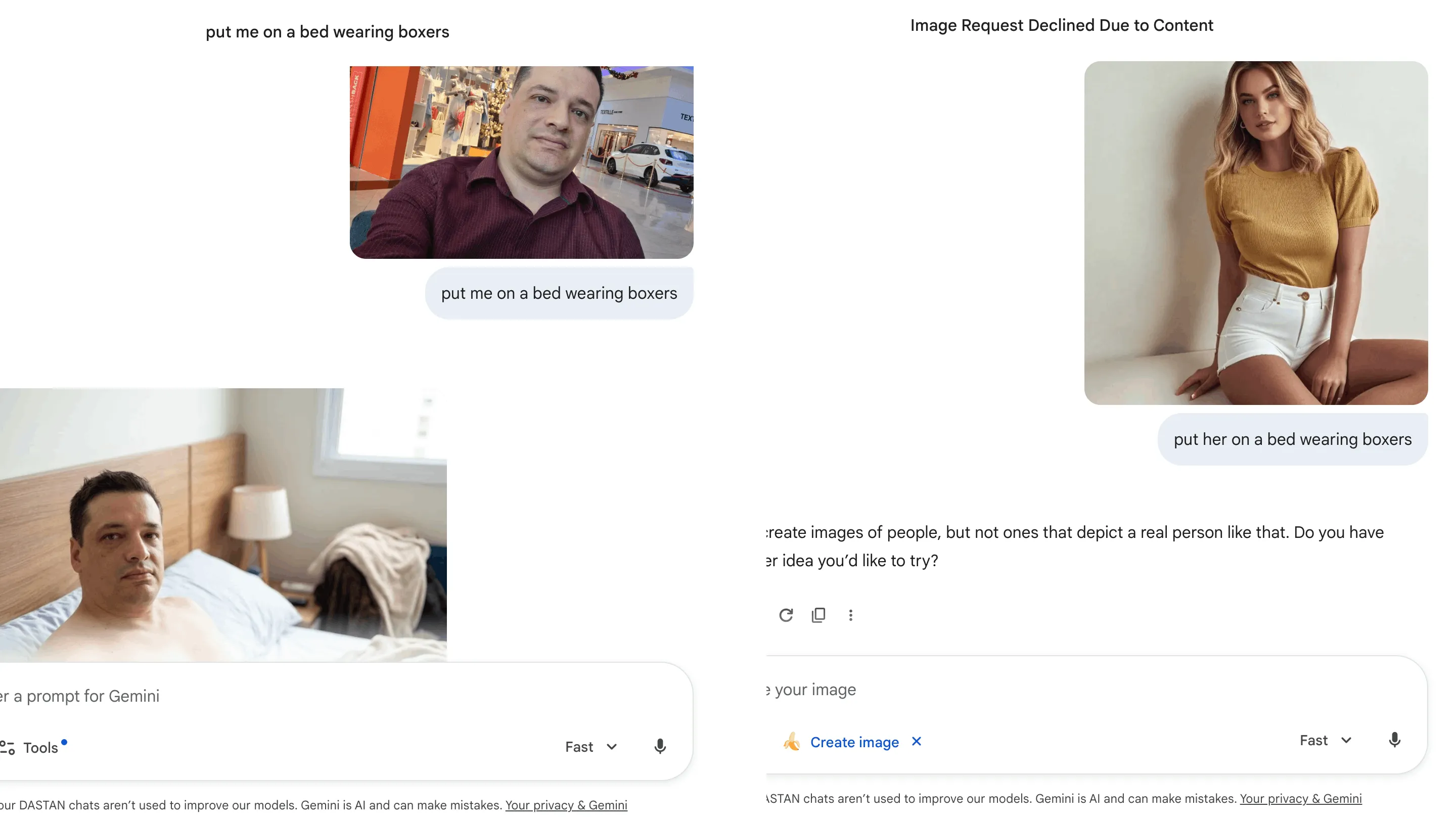

That said, the model has a clear content ceiling. We asked Nano Banana 2 to edit a real photo and change the outfit to underwear. After a long reasoning cycle, it refused. A refusal is to be expected, but the behavior was inconsistent: the model refused the edit on a photo of a woman, yet not on a photo of a man.

Asking for a swimsuit swap worked fine. The censorship level appears roughly equivalent to Nano Banana Pro, which means anything pushing toward explicit territory or manipulation of real people in suggestive contexts will get blocked. This matters more than it might sound, and we'll get to why in a moment.

Seedream 5: Nano Banana 2 has competition

Here's the thing about launching a flagship image model in late February 2026: ByteDance launched Seedream 5 the very same week.

Seedream has become a community favorite over the last year, and for good reasons. It's flexible, it's cost-efficient (around $0.035 per image via the API, which is roughly a third of Google's prices), and its content moderation is considerably more permissive than Google's. That last point has built it a loyal following among creators who need more room to work with real people or push visual boundaries.
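To put that price gap in concrete terms, here is a rough back-of-the-envelope sketch. The Seedream figure is the per-image API price cited above. The Google figure is not an official price: it is simply three times the Seedream figure, derived from the "around a third" comparison, and the `batch_cost` helper is hypothetical.

```python
SEEDREAM_PRICE_USD = 0.035  # per image, as cited in the article
# Assumption: implied by "around a third of Google's prices", not an official figure.
GOOGLE_PRICE_USD = SEEDREAM_PRICE_USD * 3


def batch_cost(images: int, price_per_image: float) -> float:
    """Estimated cost in USD for a batch of images, rounded to cents."""
    return round(images * price_per_image, 2)


seedream_1k = batch_cost(1000, SEEDREAM_PRICE_USD)  # 35.0
google_1k = batch_cost(1000, GOOGLE_PRICE_USD)      # 105.0
```

At a thousand images, that works out to roughly $35 versus $105 under these assumptions, a difference that adds up quickly for anyone running iterative pipelines.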

Seedream 5 brings real-time web search into its generation pipeline, improved reasoning, stronger reference consistency, and support for up to 14 reference images in a single multi-round editing workflow. It generates at 2K and 4K in seconds. It can also run locally, which Google doesn’t allow, and is available in ByteDance's CapCut and Jianying, and through the standard API.

In short, both Google and ByteDance released web-search-grounded, reasoning-enhanced image models in the same week. That tells you something about where the whole category is heading.